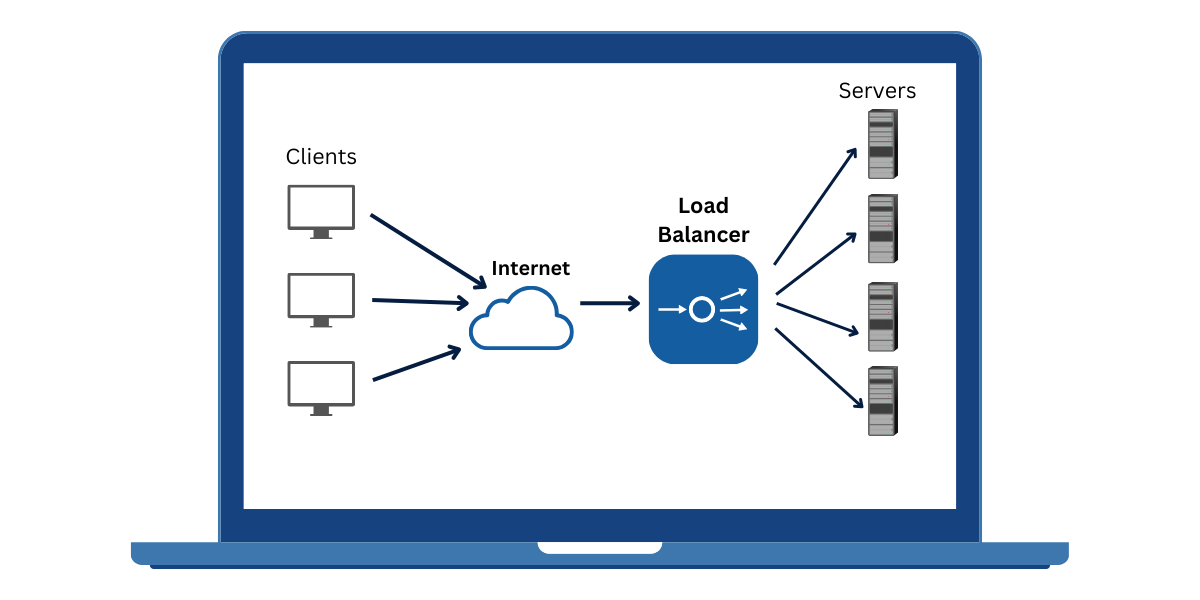

SSL termination (also called SSL offloading) is the process of decrypting HTTPS traffic at a load balancer before passing unencrypted requests to backend servers. This removes the SSL/TLS processing burden from individual servers, improves performance, and centralises certificate management in one place.

Load balancers from AWS, Cloudflare, Nginx, HAProxy, and F5 all support SSL termination as a standard deployment pattern. As of 2025, approximately 90% of web traffic is encrypted using SSL/TLS — making centralised termination a practical necessity rather than an optional optimisation.

What Is SSL Termination?

SSL termination is the point in a network where an encrypted TLS connection ends and the payload continues in plain HTTP. The load balancer decrypts the client’s HTTPS request, reads the HTTP headers, and routes the request to the correct backend server, either as plain HTTP or re-encrypted over a separate internal TLS connection. The backend’s response returns to the load balancer, which encrypts it over the client’s TLS session before delivery. When termination happens at the load balancer, backend servers never see the client’s raw TLS traffic.

The terms “SSL termination” and “SSL offloading” mean the same thing in practice. Both describe moving TLS decryption work away from backend application servers. The older term “SSL” persists in documentation and tooling, even though modern deployments use TLS 1.2 or TLS 1.3 exclusively.

How SSL Termination Works Step by Step

The process is sequential and happens entirely at the network edge before any backend server receives a request. Understanding each step clarifies why the load balancer, not the server pool, holds the SSL certificate and private key.

1. Client sends an HTTPS request on port 443 to the load balancer’s public IP.

2. Load balancer performs the TLS handshake, presenting its certificate to the client’s browser.

3. Browser validates the certificate against a trusted CA; both sides agree on a symmetric session key.

4. Load balancer decrypts the inbound HTTPS payload into plain HTTP using that session key (its private key is used only during the handshake).

5. Decrypted HTTP request is forwarded to the selected backend server on port 80 (or a private HTTPS port for re-encryption).

6. Backend server processes the request and returns an HTTP response.

7. Load balancer encrypts the response with the session key and returns it to the client over the same TLS connection.

Step 2 is where the CPU cost lives. A single RSA-2048 handshake can consume up to 10ms of CPU time according to OneUptime’s 2026 load balancer SSL termination guide. Offloading that work to a dedicated load balancer frees backend servers to focus on application logic.
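To put the 10ms figure in perspective, the arithmetic can be sketched in a few lines of Python. This is a rough back-of-the-envelope model, not a benchmark: it assumes every new connection pays for a full handshake (no session resumption), and the function names and the example load of 200 connections per second on 4 cores are illustrative assumptions.

```python
def max_full_handshakes_per_second(cpu_ms_per_handshake: float, cores: int = 1) -> float:
    """Upper bound on full TLS handshakes per second if handshakes were the only CPU work."""
    if cpu_ms_per_handshake <= 0:
        raise ValueError("cpu_ms_per_handshake must be positive")
    # Each core has 1000 ms of CPU time available per second.
    return cores * 1000.0 / cpu_ms_per_handshake


def cpu_share_for_handshakes(new_connections_per_second: float,
                             cpu_ms_per_handshake: float,
                             cores: int) -> float:
    """Fraction of total CPU capacity spent on handshakes (above 1.0 means overloaded)."""
    return new_connections_per_second * cpu_ms_per_handshake / (cores * 1000.0)


if __name__ == "__main__":
    # Worst case quoted above: 10 ms of CPU per RSA-2048 handshake.
    print(max_full_handshakes_per_second(10))      # 100.0 handshakes/s on one core
    # Hypothetical backend: 200 new connections/s on a 4-core server.
    print(cpu_share_for_handshakes(200, 10, 4))    # 0.5, i.e. half the CPU
```

Session resumption and keep-alive connections lower the real cost considerably, so treat these numbers as a ceiling on the overhead rather than a forecast.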

SSL Termination vs SSL Passthrough vs SSL Bridging

SSL termination, SSL passthrough, and SSL bridging are three distinct ways to handle HTTPS traffic at a load balancer. The right choice depends on whether end-to-end encryption is required, whether the internal network is trusted, and whether Layer 7 features such as WAF filtering or content routing are needed.

| Feature | SSL Termination | SSL Passthrough | SSL Bridging |
| --- | --- | --- | --- |
| Where decrypted | Load balancer | Backend server | Load balancer and backend |
| Backend traffic | HTTP (unencrypted) | HTTPS (encrypted) | HTTPS (re-encrypted) |
| Certificate location | Load balancer only | Backend server | Both |
| Performance | Best | Lower | Moderate |
| Best for | Public web apps | End-to-end encryption requirements | Compliance environments |

SSL bridging re-encrypts traffic between the load balancer and backend servers. It adds CPU overhead compared to termination but satisfies compliance environments where unencrypted internal traffic is not permitted. For a detailed breakdown of all three modes, see the guide to SSL passthrough vs SSL termination.
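The decision between the three modes can be expressed as a small helper. The sketch below is a deliberate simplification that considers only two of the criteria named above (whether Layer 7 features are needed and whether plaintext is acceptable on the internal network); the names `TlsMode` and `choose_tls_mode` are hypothetical, and real deployments will weigh further factors.

```python
from enum import Enum


class TlsMode(Enum):
    TERMINATION = "termination"
    PASSTHROUGH = "passthrough"
    BRIDGING = "bridging"


def choose_tls_mode(needs_layer7: bool, internal_plaintext_allowed: bool) -> TlsMode:
    """Pick a TLS handling mode from two simplified requirements."""
    if internal_plaintext_allowed:
        # Trusted internal network: decrypt once at the load balancer.
        return TlsMode.TERMINATION
    if needs_layer7:
        # The load balancer must read HTTP, but the backend hop must be encrypted.
        return TlsMode.BRIDGING
    # No HTTP inspection needed: let the backend hold the certificate.
    return TlsMode.PASSTHROUGH


if __name__ == "__main__":
    print(choose_tls_mode(needs_layer7=True, internal_plaintext_allowed=False).value)
```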

How to Configure SSL Termination (Platform Guides)

Each platform follows the same core logic: bind port 443 with a TLS certificate, then forward decrypted traffic to backends on port 80. The specifics differ in where you store the certificate, how you select TLS version policy, and how certificate renewal is automated.

SSL Termination on AWS Application Load Balancer (ALB)

AWS ALB terminates TLS at the load balancer layer and passes HTTP to target groups. AWS Certificate Manager (ACM) handles certificate storage and renewal automatically. The TLS versions and ciphers the ALB accepts are controlled by the security policy attached to the HTTPS listener.

- In the AWS Console, open EC2 > Load Balancers and select your ALB.

- Add an HTTPS listener on port 443 and attach a certificate from ACM.

- Choose a security policy – use ELBSecurityPolicy-TLS13-1-2-2021-06 to enforce TLS 1.2+ minimum.

- Set the default target group to HTTP, port 80.

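- Verify the setup: run curl -I https://yourdomain.com and confirm the request succeeds over HTTPS without certificate errors. Adding -v shows the negotiated TLS version and the certificate the ALB presents.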
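The console steps above can also be scripted. The sketch below builds the arguments for the `create_listener` call of the boto3 `elbv2` client; the helper name `https_listener_params` and all three ARNs are placeholders, and the boto3 call itself is left commented out because it needs AWS credentials and existing resources.

```python
def https_listener_params(load_balancer_arn: str,
                          certificate_arn: str,
                          target_group_arn: str,
                          ssl_policy: str = "ELBSecurityPolicy-TLS13-1-2-2021-06") -> dict:
    """Keyword arguments for an HTTPS:443 listener that forwards to an HTTP target group."""
    return {
        "LoadBalancerArn": load_balancer_arn,
        "Protocol": "HTTPS",
        "Port": 443,
        "SslPolicy": ssl_policy,                          # security policy from the step above
        "Certificates": [{"CertificateArn": certificate_arn}],  # certificate stored in ACM
        "DefaultActions": [
            {"Type": "forward", "TargetGroupArn": target_group_arn}
        ],
    }


if __name__ == "__main__":
    params = https_listener_params(
        "arn:aws:elasticloadbalancing:REGION:ACCOUNT:loadbalancer/app/EXAMPLE",  # placeholder
        "arn:aws:acm:REGION:ACCOUNT:certificate/EXAMPLE",                        # placeholder
        "arn:aws:elasticloadbalancing:REGION:ACCOUNT:targetgroup/EXAMPLE",       # placeholder
    )
    print(params["Protocol"], params["Port"])
    # With real ARNs and credentials configured:
    # import boto3
    # boto3.client("elbv2").create_listener(**params)
```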

SSL Termination on Nginx

Nginx acts as both a reverse proxy and a TLS termination point. Place the certificate and private key on the Nginx server, and proxy plaintext traffic to backend upstreams.

A minimal nginx.conf block for SSL termination:

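    server {
        # Terminate TLS on port 443 and offer HTTP/2 to clients.
        listen 443 ssl http2;

        # Certificate and private key live on the Nginx host.
        ssl_certificate     /etc/nginx/ssl/yourdomain.crt;
        ssl_certificate_key /etc/nginx/ssl/yourdomain.key;

        # Accept TLS 1.2 and TLS 1.3 only.
        ssl_protocols TLSv1.2 TLSv1.3;
        ssl_ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256;

        # HSTS: tell browsers to use HTTPS for the next two years.
        add_header Strict-Transport-Security "max-age=63072000" always;

        # Forward decrypted requests as plain HTTP. backend_pool must be
        # defined in an upstream block elsewhere in the configuration.
        location / { proxy_pass http://backend_pool; }
    }

Enable OCSP stapling and set ssl_session_cache to reduce handshake overhead on repeat connections. Certbot with the certbot-nginx plugin handles Let’s Encrypt certificate renewal automatically.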
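One way to check that the protocol restriction behaves as intended is to probe the server with a client pinned to a single TLS version. The following is a minimal sketch using Python’s standard `ssl` module; the helper names and the hostname `yourdomain.com` are placeholders, and the probe reports False for any connection failure, including network errors, so a False result needs a second look before drawing conclusions.

```python
import socket
import ssl


def pinned_client_context(version: ssl.TLSVersion) -> ssl.SSLContext:
    """Client context that offers exactly one TLS protocol version."""
    ctx = ssl.create_default_context()   # verifies the certificate and hostname
    ctx.minimum_version = version
    ctx.maximum_version = version
    return ctx


def negotiates(host: str, version: ssl.TLSVersion,
               port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if the server completes a handshake at the given version."""
    ctx = pinned_client_context(version)
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            with ctx.wrap_socket(sock, server_hostname=host) as tls:
                return tls.version() is not None
    except OSError:
        # ssl.SSLError is a subclass of OSError, so handshake rejections,
        # certificate failures, and network errors all end up here.
        return False


if __name__ == "__main__":
    host = "yourdomain.com"  # placeholder hostname
    for v in (ssl.TLSVersion.TLSv1_2, ssl.TLSVersion.TLSv1_3):
        print(v.name, negotiates(host, v))
```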

SSL Termination on HAProxy

HAProxy requires the certificate and private key to be combined into a single .pem file before binding to the HTTPS frontend.

    frontend https_in
        bind *:443 ssl crt /etc/haproxy/certs/yourdomain.pem
        default_backend app_servers

    backend app_servers
        server web1 10.0.1.10:80 check

For certificate renewal, replace the .pem file and run systemctl reload haproxy. No downtime is required. Set ssl-default-bind-options ssl-min-ver TLSv1.2 in the global block to enforce minimum protocol versions.
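Building the combined .pem file is easy to automate. The sketch below concatenates a certificate chain and a private key into one file and swaps it into place atomically, so HAProxy never reads a half-written file during a reload. The function name `build_haproxy_pem` and the paths in the usage comment are assumptions for illustration.

```python
from pathlib import Path


def build_haproxy_pem(cert_chain, private_key, output) -> None:
    """Write certificate chain + private key into a single PEM file for HAProxy."""
    parts = [Path(p).read_text().strip() for p in (cert_chain, private_key)]
    output = Path(output)
    tmp = output.with_name(output.name + ".tmp")
    tmp.write_text("\n".join(parts) + "\n")
    tmp.chmod(0o600)        # the file contains the private key
    tmp.replace(output)     # atomic rename on the same filesystem


# Example usage (paths are assumptions; adjust to your layout), followed by
# `systemctl reload haproxy` as described above:
# build_haproxy_pem(
#     "/etc/letsencrypt/live/yourdomain.com/fullchain.pem",
#     "/etc/letsencrypt/live/yourdomain.com/privkey.pem",
#     "/etc/haproxy/certs/yourdomain.pem",
# )
```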

SSL Certificate Management at the Load Balancer

One certificate lives at the load balancer and covers all backend servers behind it. This is the primary management advantage of SSL termination – renewal updates a single location rather than every node in a server pool.

When choosing a certificate type for load balancer termination, consider these options:

- Single-domain DV certificate: Suitable for one public hostname, fastest to issue.

- Wildcard SSL certificate: Secures *.yourdomain.com, ideal for environments with multiple subdomains routed through the same ALB or Nginx instance. Read the full guide to wildcard SSL certificate management before selecting this option.

- SAN/Multi-domain certificate: Covers multiple hostnames in a single cert, useful for ALBs serving several domains.

AWS ACM renews certificates automatically with no manual intervention. For Nginx or HAProxy deployments, Certbot’s cron job handles Let’s Encrypt 90-day renewals – run certbot renew twice daily and reload the service to apply the new certificate without downtime.
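Automated renewal is worth monitoring independently. As a minimal sketch, the helper below turns a certificate’s `notAfter` string (the format returned by `ssl.SSLSocket.getpeercert()`) into days remaining and flags certificates that are close to expiry. The 30-day threshold and the function names are assumptions chosen for illustration.

```python
import ssl
from datetime import datetime, timezone


def days_until_expiry(not_after: str, now: datetime) -> float:
    """Days between `now` (timezone-aware) and a certificate's notAfter timestamp.

    `not_after` uses the OpenSSL text form, e.g. "Jan 31 00:00:00 2030 GMT".
    """
    expires = datetime.fromtimestamp(ssl.cert_time_to_seconds(not_after), tz=timezone.utc)
    return (expires - now).total_seconds() / 86400


def needs_renewal(not_after: str, now: datetime, threshold_days: float = 30.0) -> bool:
    """True when the certificate expires within `threshold_days` (assumed threshold)."""
    return days_until_expiry(not_after, now) <= threshold_days


if __name__ == "__main__":
    now = datetime(2030, 1, 1, tzinfo=timezone.utc)
    print(days_until_expiry("Jan 31 00:00:00 2030 GMT", now))  # 30.0
    print(needs_renewal("Jan 31 00:00:00 2030 GMT", now))      # True
```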

Is SSL Termination Secure?

SSL termination at the load balancer is secure when the internal network segment between the load balancer and backend servers is isolated and trusted. The decrypted HTTP traffic travels only within that private segment – it is never exposed to the public internet.

Three mitigations address the risk of unencrypted internal traffic:

- Private network isolation: Place load balancer and backends in a dedicated VPC or VLAN with no external routing.

- SSL bridging: Re-encrypt traffic from load balancer to backend using an internal certificate. Adds CPU overhead but eliminates plaintext internal hops.

- Mutual TLS on backend segment: Require client certificates between the load balancer and each backend server. See the guide to mutual TLS on backend servers for configuration steps.
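For the mutual TLS option, the backend side comes down to a server TLS context that refuses clients without a valid certificate. The sketch below uses Python’s standard `ssl` module and assumes the backend is a Python service; the function name is hypothetical, and the file paths are optional here only so the context can be constructed without files on disk. A real backend needs all three.

```python
import ssl
from typing import Optional


def backend_mtls_context(server_cert: Optional[str] = None,
                         server_key: Optional[str] = None,
                         client_ca: Optional[str] = None) -> ssl.SSLContext:
    """Server-side context that requires a client certificate (mutual TLS)."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Reject any client (here: the load balancer) that presents no valid certificate.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if server_cert and server_key:
        # The backend's own internal certificate and key.
        ctx.load_cert_chain(certfile=server_cert, keyfile=server_key)
    if client_ca:
        # CA that issued the load balancer's client certificate.
        ctx.load_verify_locations(cafile=client_ca)
    return ctx


if __name__ == "__main__":
    ctx = backend_mtls_context()
    print(ctx.verify_mode == ssl.CERT_REQUIRED)
```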

PCI-DSS compliance is achievable with SSL termination provided the internal network qualifies as a secure transmission medium. According to AWS’s official NLB TLS termination documentation, built-in security policies let you specify cipher suites and protocol versions to meet PCI compliance requirements at the load balancer level.

Load Balancer Types and SSL Support

SSL termination is traditionally a Layer 7 feature. A pure Layer 4 load balancer forwards TCP streams without decrypting them, so it supports SSL passthrough only; some Layer 4 products, such as AWS NLB, can also terminate TLS but still do not inspect HTTP. Layer 7 load balancers inspect HTTP headers after decryption, enabling content-based routing, WAF integration, and session persistence.

| Attribute | Layer 4 (TCP) – e.g. AWS NLB | Layer 7 (HTTP) – e.g. AWS ALB, Nginx, HAProxy |
| --- | --- | --- |
| SSL termination | Yes (TLS passthrough or terminate) | Yes (full termination) |
| Content inspection | No | Yes – HTTP headers, URLs, cookies |
| WAF integration | Limited | Full WAF support |
| Typical use case | TCP/UDP load balancing, static IPs | Web apps, API gateways, HTTPS sites |

AWS Network Load Balancer (NLB) added TLS termination in 2019 as an exception – it operates at Layer 4 but offloads certificate handling to ACM. AWS ALB remains the standard choice for full Layer 7 termination with header inspection and WAF support.
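What content-based routing means in practice can be illustrated with a toy routing function. It works only because a Layer 7 load balancer has already decrypted the request and can read the Host header and path; a Layer 4 device sees neither. The routing rules and the pool names `api_servers` and `static_servers` are hypothetical (`app_servers` echoes the HAProxy example earlier).

```python
def pick_backend(host: str, path: str) -> str:
    """Choose a backend pool from decrypted HTTP request attributes."""
    if path.startswith("/api/"):
        return "api_servers"        # hypothetical pool for API traffic
    if host.lower().startswith("static."):
        return "static_servers"     # hypothetical pool for static assets
    return "app_servers"            # default pool


if __name__ == "__main__":
    print(pick_backend("yourdomain.com", "/api/orders"))          # api_servers
    print(pick_backend("static.yourdomain.com", "/logo.png"))     # static_servers
    print(pick_backend("yourdomain.com", "/"))                    # app_servers
```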

What to Do Next

SSL termination at the load balancer reduces server CPU overhead, centralises certificate management, and enables Layer 7 features such as WAF filtering and content routing. The pattern is secure when the internal network is properly isolated or SSL bridging is applied to the backend segment.

Start with AWS ALB and ACM if you are on AWS infrastructure – certificate renewal is automated and the setup takes under ten minutes. For self-managed Nginx or HAProxy deployments, configure Certbot now to avoid manual renewal in 90 days. Once termination is live, use the SSL Checker Tool to verify your load balancer certificate is correctly presented and trusted by all browsers.

As of 2026, quantum-resistant TLS algorithms are entering early deployment phases. Watch for NIST post-quantum cipher suite additions to AWS security policies and Nginx/HAProxy releases – your termination layer will be the first place to adopt them without touching backend code.

Frequently Asked Questions About Load Balancers

What is the difference between SSL termination and SSL offloading?

SSL termination and SSL offloading refer to the same process. Both terms describe decrypting HTTPS traffic at a load balancer before forwarding requests to backend servers. “Offloading” emphasises moving CPU-intensive cryptographic work off the server pool; “termination” describes where the encrypted connection ends.

Does SSL termination expose data?

Traffic is decrypted at the load balancer and travels in plaintext to backend servers within your internal network. The exposure is limited to that segment, so the risk is low when the network is isolated and access to it is controlled. For environments where even internal plaintext is prohibited – such as PCI-DSS Level 1 – use SSL bridging or mutual TLS to re-encrypt the backend segment.

Can I use a free Let’s Encrypt certificate with a load balancer?

Yes. Install Certbot on the Nginx or HAProxy host and use the standalone or nginx plugin to obtain a 90-day certificate automatically. For AWS deployments, ACM provides free certificates with no expiry management required – renewal is fully automated. HAProxy users must concatenate cert + key into a .pem file after each renewal.

What port does SSL termination use?

Incoming HTTPS traffic arrives on port 443. After decryption, the load balancer forwards requests to backend servers on port 80 (or a custom port for re-encrypted traffic). The client browser always connects to the public HTTPS port 443 – the internal port is invisible to end users.

How does SSL termination improve performance?

SSL termination shifts TLS handshake processing from every backend server to a single termination point. Load balancers can pool and reuse plain HTTP connections to backends, so no TLS handshake is needed on the backend hop. Where the load balancer supports it, HTTP/2 multiplexing between the load balancer and backends can further reduce latency. Backend servers gain CPU headroom that would otherwise be consumed by cryptographic operations.

Is SSL termination PCI-DSS compliant?

Yes, under most PCI-DSS scope interpretations – provided the internal segment between load balancer and backend servers is a trusted, isolated network or is itself encrypted. PCI-DSS v4.0 requires strong cryptography for cardholder data sent over open, public networks; a properly segmented internal network is generally not treated as one, although the backend servers themselves remain in scope. SSL bridging (re-encryption to backend) is the safest approach for Level 1 merchants where any unencrypted internal hop must be eliminated.

Priya Mervana

Verified Web Security Experts

Priya Mervana is working at SSLInsights.com as a web security expert with over 10 years of experience writing about encryption, SSL certificates, and online privacy. She aims to make complex security topics easily understandable for everyday internet users.